
The 5 Levels of AI Adoption

3 May 2026

When it comes to AI, we are not all equal. Some push it far; others barely touch it. Five movements, observed across training rooms and missions, form a cycle, not a climb. At the heart, a hard truth: no skill is ever fully acquired.

Five levels of AI adoption, a mountain to climb?

Five movements to master AI.

A Tuesday morning in March, in Clermont-Ferrand, in a training room with about ten mortgage brokers. Twenty years on the job for the most senior. He opens his laptop, launches Excel, and installs the Claude plugin in front of us. He turns to the room, hesitates for a second, then types a prompt in plain language: « Build me a complete mortgage simulator, with a detailed amortization table, adjustable monthly payment, variable rate, modular term, total cost insurance included. » A few seconds. The tool is there. Functional. More complete than the one he buys from a specialized vendor every year. He looks at the screen. His colleagues look at the screen. He finally says, almost under his breath: « Did I just do that, or did it? »

The question is worth hearing all the way through. Because this broker did not learn to code. He did not learn advanced Excel programming. He learned neither Microsoft's financial functions, nor conditional structures, nor the constant-amortization algorithm. He learned something else. He learned how to have it done. And the question he asks, without grasping its reach, is exactly the one this essay sets out to pose.

What do we still call a skill, when the machine, on demand, is now able to summon it on your behalf?

A skill is fluid, and must be managed

You think you are learning. You are retaining skills for the time you will need them.

The persistent intuition is that of a brain-as-hard-drive. We deposit know-how into it, and it stays there, intact, available on call. Fifty years of cognitive neuroscience tell the opposite story. The brain stores nothing in disk-fashion. It maintains circuits through use, and lets them fade as soon as use stops.

Donald Hebb stated the founding principle as early as 1949[1]: « When an axon of cell A is near enough to excite a cell B and repeatedly or persistently takes part in firing it, some growth process or metabolic change takes place in one or both cells such that A's efficiency [...] is increased. » Carla Shatz turned it into a famous mantra: neurons that fire together wire together. Its corollary is less often quoted, and just as true: neurons that stop firing in unison drift apart. The skill that is no longer summoned comes undone.

Eric Kandel, in the work that earned him the 2000 Nobel Prize in Medicine, showed that long-term memory « results in the growth of new synaptic connections, a structural change that parallels the duration of behavioral memory »[2]. A lasting skill is not a saved file. It is a material neural architecture that must be maintained through use to survive.

The most compelling evidence of this fluidity remains Bogdan Draganski's study, published in Nature in 2004[3]. Twenty-four adults took part, and half of them learned to juggle three balls over three months. Before and after, their brains were scanned by MRI. Grey matter in the visuo-motor regions had significantly grown in the learners, compared to the control group. But the decisive result came three months later. Without practice, the grey matter expansion had decreased, and many participants could no longer juggle. The learned skill was being erased in the brain itself. Not metaphorically. Literally. Measured in cubic millimeters.

The reverse phenomenon was quantified at an even more brutal scale by Lotfi Merabet, Alvaro Pascual-Leone, and their team in 2008[4]. Five days are enough to reconfigure a visual cortex deprived of stimulation: subjects blindfolded for five days saw their visual cortex begin processing touch. Twenty-four hours after the blindfold was removed, the reconfiguration faded. The human brain is in permanent renegotiation with its use. That is its normal state, not a pathological exception.

No skill ever sleeps intact. It lives, it wears, it fades.

If that is the case, then the practical question of intellectual work changes nature. Holding in oneself every skill one might need is not just costly. It is cerebrally impossible. The human brain must choose what to keep, what to let slip, what to summon when the need arises. Managing skill fluidity has become a neurobiological imperative, not a professional luxury.

Could there be a tool able to hold, on demand, what the brain can no longer keep in full? The question is tempting, but it is not the right one. Before the tool comes the individual's capacity to arbitrate what they keep and what they let go. Not one more skill to learn. A skill of another order, that would govern all the others.

A skill of another order

A single skill is no longer enough. You need one that governs all the others.

Remember our broker in Clermont-Ferrand. What he mobilized without knowing it, what he is starting to master without yet having the vocabulary for it, is what work-science research has called, for more than thirty-five years, a meta-competence. The term appears in 1989 in the writing of John Burgoyne, in the context of the British Management Charter Initiative[5]. Burgoyne distinguishes ordinary competences, which let you carry out specific tasks, from a higher-order family of capacities he names « the overarching ability under which competence shelters ».

Reva Berman Brown, in 1993, gives the concept its canonical formulation[6]. The meta-competence, she writes, encompasses higher-order abilities that allow one to learn, adapt, anticipate, and create. Two years later she adds a phrase that became a reference:

Meta-competence can be learned but cannot be taught.

Not by a course. Not by a manual. It is built through use, reflective practice, accumulated experience.

Françoise Le Deist and Jonathan Winterton, in 2005, organize this vocabulary in a typology that became the European reference[7]. Four dimensions of competence: cognitive, functional, social, and meta. The fourth dimension is positioned explicitly as what « facilitates the acquisition of the other three. » A second-order skill. One that does not solve a problem, but modifies the very capacity to solve any.

This definition meets another tradition that changes everything. Donald Schön, as early as 1983, was already showing that professional competence is not stored knowledge that one deploys. It is a knowing-in-action that rebuilds itself in every situation, because professional situations are always « uncertain, unique, unstable, and conflicting »[8]. And the raw measurement of skill loss has long been established: the canonical meta-analysis on skill decay, by Winfred Arthur and his team in 1998 across 189 data points, shows an effect size of -1.4 after 365 days of non-practice[9]. In plain terms: a year without using a learned skill, and performance drops back to a level almost equivalent to never having learned at all. What neuroscience observes in MRI, work-psychology measures in performance.
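One way to read the effect size quoted above is to convert it into a percentile. The sketch below assumes, as a simplification that the essay does not state, that performance scores are normally distributed with the same spread before and after the period without practice. The helper name `standard_normal_cdf` is illustrative.

```python
import math


def standard_normal_cdf(z: float) -> float:
    """Cumulative probability of a standard normal variable at z."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


# Effect size quoted in the text for 365 days without practice.
effect_size = -1.4

# Assumption: normal scores with equal variance before and after the gap.
# The average person after the gap then sits at this percentile of the
# distribution measured before the gap.
percentile_after_gap = standard_normal_cdf(effect_size)

print(f"{percentile_after_gap:.1%}")
```

Under that assumption, the average person after a year without practice performs at roughly the 8th percentile of where the group stood before the gap.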

A meta-competence does not perform. It decides what one needs to be able to perform.

This is the definition to keep in hand for what follows. An ordinary competence lets you write an email, calculate a payment, draft a report. A meta-competence lets you decide what you want to write, calculate, or draft, and mobilize internal or external resources to get there. It governs. It does not produce, it orients production. And among its main functions is one no one had named in those terms: to arbitrate skill fluidity. Which to keep in oneself, which to leave within reach without holding, which to summon on demand when the moment comes.

Generative AI meets the criterion

AI is not one more skill. It opens all the others.

In Les 2 Alpes, during a training session, a front-desk attendant composes, with a music AI, an original song to help her three-year-old daughter tidy her room. She has never studied music. In Lyon, two members of Suez's executive committee draft contractual notes in twenty minutes that they used to outsource to a law firm. In Paris, a private citizen dissects his own home insurance proposal, legal clause by legal clause, and asks the machine where the pitfalls are. In Clermont, the broker writes his simulator. All of them, without exception, produced something they would never have produced alone. None of them learned the underlying skill beforehand. The skill came to them, through the machine, on demand.

This observation, repeated hundreds of times in missions and trainings, is now corroborated by the most rigorous studies of the moment. Zhicheng Lin and Aamir Sohail, in Patterns (Cell Press), put it in January 2026[10] with striking accuracy:

GenAI can perform the foundational intellectual activities through which expertise itself develops: reading literature, designing studies, writing arguments, and generating code.

Every skill that runs through writing, which makes up the dominant share of knowledge work, becomes accessible. Code. Translation. Academic research. Communication. Strategy. Synthesis. Drafting contracts. Reviewing literature. Analyzing commercial proposals.

The numbers anchor the claim. Erik Brynjolfsson, Danielle Li, and Lindsey Raymond measured, on 5,179 customer service agents, an average productivity gain of +14%, climbing to +34% for novices[11]. The effect is equalizing: AI diffuses the best practices of top performers down to the new hires. Fabrizio Dell'Acqua, Ethan Mollick, and their colleagues observed, on 758 Boston Consulting Group consultants, gains of +12.2% in tasks completed, 25% faster, with +40% higher quality[12]. Developers using GitHub Copilot, in a Microsoft controlled experiment with nearly 2,000 engineers, gain between +12% and +22% pull requests per week[13]. In concrete terms, at the upper end of that range, an engineer ships in roughly four days what used to take five, with no change of role, no extra training, no waiting for the hierarchy to authorize anything. AI does not create the skill from scratch. It makes it available on demand. You only need, for that, to master the lever.
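The conversion from a weekly throughput gain to days saved can be checked in a few lines. This is a back-of-the-envelope sketch, assuming a five-day week and treating pull requests as a proxy for output; the function name `days_for_same_output` is illustrative.

```python
def days_for_same_output(baseline_days: float, throughput_gain: float) -> float:
    """Days needed to ship the old weekly output at the new throughput."""
    return baseline_days / (1.0 + throughput_gain)


# Lower and upper ends of the pull-request gain quoted in the text.
low_gain_days = days_for_same_output(5, 0.12)
high_gain_days = days_for_same_output(5, 0.22)

print(round(low_gain_days, 2), round(high_gain_days, 2))
```

On these assumptions, the same week of output takes about four and a half days at +12% and just over four days at +22%.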

Mastering AI is arbitrating, all the time: what I keep inside me because I need it every day, and what I leave within prompt-reach because I will only need it the moment I summon it.

The cycle, in five movements

You think you climb. You loop. And every loop expands you.

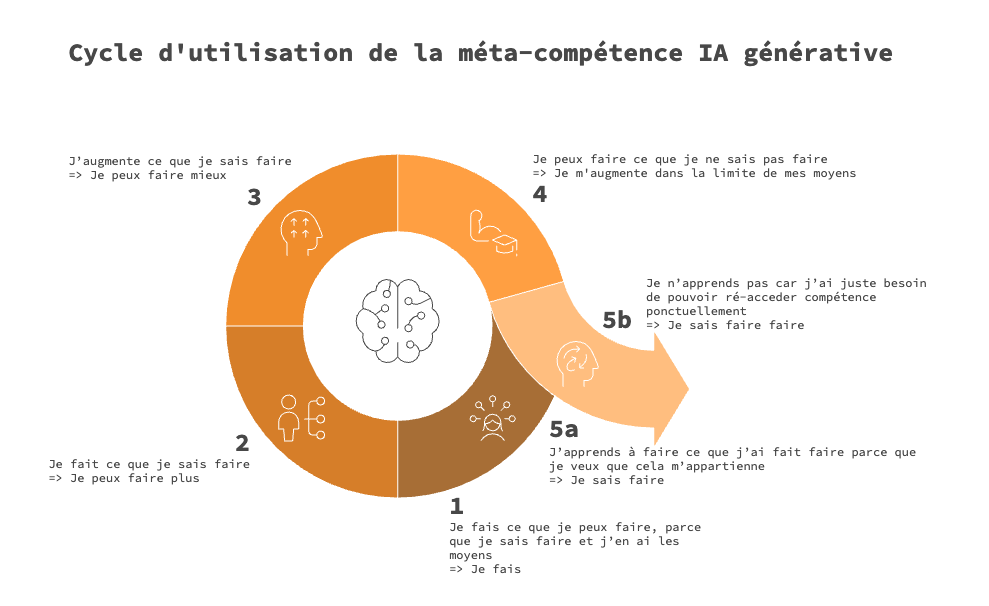

The classical models of technology adoption, since Everett Rogers in 1962, describe diffusion as a slope. Innovators adopt first, followed by early adopters, then by the majority, and finally by laggards. These models work to describe how a product permeates a population. They say nothing about what happens inside an individual who is taking on the meta-competence. Three years of direct observation, in trainings and missions, reveal something else. Not a slope. A movement that comes back on itself, expanding, with each loop, the perimeter of the doable.

Five movements, then. Five, but in a loop. And each one deserves to be unfolded, because each plays out its own dynamic, its accelerators, its brakes, and a trigger that pushes one into the next, or doesn't.

Movement one, I do what I can do

Search engine, upgraded. You are still at the threshold.

The starting point. The user does what they have always done, with the tools they have always had. AI has entered their life, but as a slightly smarter search engine. They ask it for the definition of a term, the explanation of a concept, the translation of an expression. The machine answers, the user copies. Nothing else changes.

The OpenAI economic study published in September 2025 measures this first movement[14]. Across 1.1 million de-identified messages exchanged with ChatGPT, the researchers identified an intent they call Asking: requesting a piece of information or an explanation. This intent represents 49% of messages.

A reading note matters here. The figure does not say that half the users do only this. It says that half the prompts sent are of this kind. The same user can, on the same day, ask a spelling question and elsewhere launch a more ambitious project. The cycle describes registers of use, not categories of users.

What holds people at movement one is rarely a lack of intelligence. It is a representational brake, as I observe in almost every initiation session. The machine is perceived as oracle, as entity, as a superior intelligence that decides. As long as that representation holds, the user remains a petitioner, never an author. What unlocks the move is exposure to simple use cases that defuse the magic. The machine ceases to be oracle. It becomes a tool. The user can now move forward.

Movement two, I do what I know how to do

You are saving time. You are not yet gaining autonomy.

AI enters the picture, but as an assistant. It accelerates what the user was already doing. Meeting minutes from rough notes. Reminder email to a non-responsive client. Synthesis of a too-long report. Translation of a memo. The user remains master of the object, the machine accelerates production. Same tasks, faster. The perimeter of the doable expands in volume, not in nature.

In the OpenAI study, this intent is called Doing. It represents 40% of messages. Combined with Asking, it covers nearly 90% of measured uses.

What holds people at movement two is a mix of routine and settled satisfaction. The user has already gained time, which is enough to justify the initial learning effort. They go no further because they don't see why they should. The trigger to movement three, at this stage, is almost always external. A demonstration seen at a colleague's. A personal use that surprises, in the evening, at home. A song composed in two minutes to tidy a child's room. A recipe adapted to a particular diet. The trigger is rarely professional. It almost always comes from the other sphere.

This dimension is central, and rarely named. The depth of AI use is interdependent between the personal and professional spheres, which is what information-systems literature has called, since Aurélie Leclercq-Vandelannoitte, the reversed IT adoption logic[15]. Employees transfer their experience of personal technologies into the workplace. With generative AI, this transfer is qualitatively deeper than anything we have seen before. It is no longer a device one brings to the office. It is an augmented cognitive capacity that settles in, everywhere.

Movement three, I expand what I know how to do

You no longer ask. You no longer have it done. You think with.

The user is no longer content to go faster. They do better, or differently. They discover that AI also improves quality, precision, clarity. They think with. They dialogue. They reformulate, they have it reformulated, they argue against their own ideas by submitting them to the machine. Strategic analysis gains in subtlety. The synthesis note gains in structure. The commercial proposal gains in coherence.

In the OpenAI study, this intent is called Expressing. It represents 11% of messages. Statistically, this is the threshold where the meta-competence becomes active. The user no longer asks, no longer has it done. They think with.

The crossing from movement two to movement three is a threshold. And that threshold is crossed by a recurring profile I encounter in almost every organization where a request for AI training is formulated. A catalyst, almost always alone. High curiosity, willingness to test, a sharper unease in the face of the proceduralization of their craft. This profile corresponds exactly to what work psychology calls, since Bateman and Crant, the proactive personality, and to what Amy Wrzesniewski and Jane Dutton theorized under the name job crafting[16]: the bottom-up capacity to redefine the boundaries of one's own role to align it with one's preferences and needs.

The concept I name BYOA, Bring Your Own AI, which the dedicated essay BYOA, what employees do without permission will develop, is nothing other than an act of technologically armed job crafting. The catalyst does not just mentally redefine the role. They concretely automate the procedural parts and claim the meaningful ones. And it is precisely at that moment that they tip into movement three.

Movement four, I can do what I do not know how to do

You cross your own border. No diploma. No permission.

This is the tipping point. The user steps out of their initial perimeter. The mortgage broker who writes a simulator without ever having learned to code. The events officer who builds a dashboard without BI training. The private citizen who dissects an insurance proposal line by line without having studied law. The HR director who drafts a fine-grained legal analysis on a dismissal case without a labour-law degree. The meta-competence is at work, frontally.

This movement cannot be isolated in the OpenAI numbers, because the statistical volume does not yet allow it. These uses remain a small minority, but they are growing fast, and field observation confirms it, everywhere, every week. Controlled studies measure it indirectly. When Iavor Bojinov and his team at Harvard observe +67% in conceptualization and +74% in writing on tasks where the user lacked initial expertise[17], that is exactly what they are measuring. With an honest caveat: AI provides the map; navigating the terrain still requires experience the machine does not provide. Movement four does not abolish domain expertise; it expands the perimeter of what the user can take on alone.

What holds people at the crossing from three to four is almost always a brake of self-representation, more than a cognitive brake. The phrase that comes back in training is invariable: « That's not my job. » It is rarely true. It is almost always the echo of a habitus, in Bourdieu's sense, that has traced the contours of the possible before any conscious deliberation. The Clermont broker, before his simulator, would have sworn coding was not his job. He learned, in a few minutes, that it had become his job without his having asked for it.

And here a phenomenon arises that I have seen named nowhere in the research, but that deserves a name: the demonstration shock. Interested participants, who perceive the machine's potential, find themselves in cognitive overload. Strong emotion, refusal to practice, blanket rejection. When you can do too much at once, you no longer want to. The system locks rather than opening. It is the dark counterpoint of the mechanism that the essay Where there's a way, there's a will describes in its luminous version. The meta-competence is also won by dosing.

Movement five, the bifurcation

You have done it. What's left is to choose what's yours.

The decisive moment of the cycle is not movement four. The decisive moment is what comes after. Because there, an old reflex returns, deeply rooted in modern work culture. The reflex to internalize. The idea that a skill is only real if it is integrated, owned, retained.

This belief is the direct legacy of an economy of knowledge scarcity. When access to knowledge was slow, costly, controlled by institutions, then everything within reach had to be learned. What you didn't learn, you lost. That era is over. And the cycle, at its fifth movement, takes note.

Movement five-a. I learn to do what I had it do, because I want it to be mine. The Clermont broker decides to understand how his Excel simulator works. He studies the financial functions the machine mobilized. He owns the constant-amortization logic. The skill becomes his, internalized. It stays with him, even if the machine shuts down. This path is rational for skills he will need on a regular basis, because repeated use justifies the cost of integration.
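To give a sense of what internalizing would involve here, a minimal sketch of a constant-amortization schedule follows, written in Python rather than Excel. It assumes a fixed annual rate and monthly periods, and it leaves out insurance and variable rates. The function and field names are illustrative and are not what the plugin generated.

```python
def constant_amortization_schedule(principal: float, annual_rate: float, months: int):
    """Build a schedule where the same amount of capital is repaid each month.

    Interest is charged on the remaining balance, so the total monthly
    payment declines over time (unlike a constant-payment loan).
    """
    monthly_rate = annual_rate / 12
    capital_per_month = principal / months
    balance = principal
    rows = []
    for month in range(1, months + 1):
        interest = balance * monthly_rate
        balance -= capital_per_month
        rows.append({
            "month": month,
            "payment": capital_per_month + interest,
            "interest": interest,
            "capital": capital_per_month,
            "balance": max(balance, 0.0),
        })
    return rows


# Hypothetical loan: 120,000 over 12 months at 3.6% a year.
schedule = constant_amortization_schedule(120_000, 0.036, 12)
total_interest = sum(row["interest"] for row in schedule)
```

The logic fits in a dozen lines, which is the point of five-a: once understood, it can be rebuilt or checked without the machine.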

Movement five-b. I do not learn, because I just need to be able to come back to it when I need it. The same broker decides he doesn't need to understand, because he knows he will be able to regenerate the simulator in two minutes the next time a client asks. He is making a rational choice. He is not lazy. He is saving a limited cognitive resource to spend on something else. What he keeps is the meta-competence, the capacity to have it done. What he doesn't bother retaining is the detail. This path is rational for one-off skills, or for those that have to be updated at regular intervals. Annual tax filing, whose rules change with every fiscal move. SEO, social-media management, watching algorithms that reshape themselves week after week. These skills are not worth the effort of internalization, because the time spent maintaining them would exceed the time they stay valid. Having it done is more efficient than doing.

A skill does not need to be in you to be yours.

The idea stings. It stings because it contradicts five decades of the pedagogy of effort, of skill-based management, of individual know-how mapping. Yet it is consistent with what cognitive science has called, since Evan Risko and Sam Gilbert, cognitive offloading[18]. Writing a note so as not to memorize. Using a calculator so as not to compute. Asking an AI so as not to draft. The principle is the same. The degree changes.

A nuance defuses the false opposition between five-a and five-b. The motion between them is reversible. A learned skill can, through non-use, slide back into five-b, as neuroscience has shown on a scanner. The broker who understood Excel's financial functions but did not practice them for three years will have partly lost them. He will have to come back to the machine to have it done again. His five-a will have become five-b again. Conversely, a skill long held in five-b can switch to five-a the day the user decides to integrate it, because they now have a regular use that justifies the cognitive cost of learning. The motion is not frozen. The fluidity of the skill also implies the fluidity of the decision to internalize or not.
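The arbitration between five-a and five-b can be pictured as a simple break-even test. The sketch below is a toy model, not a method taken from the research cited here: every parameter name and every figure is hypothetical, and real decisions involve factors (risk, pleasure, identity) that no such rule captures.

```python
def should_internalize(
    uses_per_year: float,
    minutes_saved_per_use: float,
    learning_minutes: float,
    upkeep_minutes_per_year: float,
    stable_years: float,
) -> bool:
    """Return True when holding the skill pays back while it stays valid.

    minutes_saved_per_use is the time gained by doing the task yourself
    instead of having it done; stable_years is how long the skill stays
    valid before its rules change.
    """
    benefit = uses_per_year * minutes_saved_per_use * stable_years
    cost = learning_minutes + upkeep_minutes_per_year * stable_years
    return benefit > cost


# Hypothetical figures: a skill used daily versus one used once a year.
daily_skill = should_internalize(200, 5, 600, 60, 5)
yearly_skill = should_internalize(1, 30, 600, 300, 1)
```

With these made-up numbers the daily skill is worth internalizing and the yearly one is not. If the frequency of use changes, the answer flips, which is the reversibility described above.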

The decisive criterion is no longer internalization. It is availability.

This inversion is the real stake of the essay. As long as the skill is available, in oneself or outside, the subject can act. And action is what counts, not possession. The awareness that the skill is accessible unlocks the desire to undertake. That is the cognitive mechanism that the essay Where there's a way, there's a will develops in detail.

And the cycle, then, restarts at movement one. More precisely, at a new starting point, because the doable zone has expanded. The cycle resumes, on a wider terrain. And it is that motion, repeated, accumulated, that constitutes the living meta-competence.

Use, with conditions

You can do anything with AI. You cannot do it any way you like.

Everything that precedes rests on a condition I have not yet named. That the use of AI be regulated. Not raw. Not blind. Not magical. Without that regulation, the cycle seizes up, five-b becomes deskilling, and the promised meta-competence undoes itself instead of building.

The risk is measured, and it would be dishonest to hide it. The Microsoft Research team, in a study presented at the CHI conference in 2025, observed across 319 knowledge workers and 936 use cases that the confidence placed in the machine correlates negatively with the mobilization of critical thinking[19]. The more the user trusts, the less they verify. The more they hand over, the more they deskill.

Used badly, AI undoes what it promised to enhance.

Conversely, Zhicheng Lin and Aamir Sohail, in the Patterns article cited above, identify three capacities to deliberately cultivate so that AI keeps its promise[10]. Strategic direction, the ability to formulate a clear intention that guides the machine instead of letting it drift. Critical discernment, the ability to evaluate the output, spot a hallucination, weigh what deserves verification. Systematic calibration, the ability to know when to accept, when to edit, when to reject. With these three capacities, five-b becomes a perfectly legitimate strategy of cognitive allocation, and five-a a true ownership. Without them, five-b becomes metacognitive deskilling, and five-a a fake learning that amounts to mere copying.

This regulated use is not a side note. It is the threshold that separates real augmentation from the illusion of use. And it deserves an essay of its own: Generative AI, is your use a good use?

What is at stake at the scale of organizations

Refusing AI in a company, you think you preserve. You impoverish.

If mastering generative AI is a meta-competence, then organizations that restrict access to it, out of mistrust, slow decision-making, or legal fear, do not merely deprive their employees of a tool. They block their access to the meta-competence itself. And since this meta-competence is the lever of all the others, the net effect is an amputation of their employees' capacity to learn, to adapt, to redesign themselves.

The one without access to generative AI does not lose just one skill; they lose the capacity to acquire others.

With every loop avoided, their doable perimeter shrinks relative to that of equipped peers.

Organizations that restrict do not preserve their structure; they install a lasting inequality between those that equip and those that hold back.

The balance of power shifts not by scale effects, but by a silent accumulation of meta-competences among those who have access.

The phenomenon is no projection. It is happening, every day, in offices where employees go around their employer by using AI on their personal computer or their phone. That is exactly what the essay The revolution that never happened describes, and what the essay BYOA, what employees do without permission will tackle head-on.

The brake is not cognitive. It is organizational. And it is, at the scale of companies, exactly what the fear of learning is at the scale of an individual: a refusal to close the loop.

If a skill no longer needs to be in you to be yours, who still decides what we call knowing?

Sources

1. Donald O. Hebb, The Organization of Behavior: A Neuropsychological Theory, Wiley & Sons, 1949.

2. Eric R. Kandel, « The Molecular Biology of Memory Storage: A Dialogue Between Genes and Synapses », Science, vol. 294, no. 5544, 2001.

3. Bogdan Draganski, Christian Gaser, Volker Busch, Gerhard Schuierer, Ulrich Bogdahn & Arne May, « Neuroplasticity: Changes in grey matter induced by training », Nature, vol. 427, no. 6972, 2004.

4. Lotfi B. Merabet, Roy Hamilton, Gottfried Schlaug et al., « Rapid and reversible recruitment of early visual cortex for touch », PLoS ONE, vol. 3, no. 8, 2008.

5. John Burgoyne, « Creating the managerial portfolio: building on competency approaches to management development », Management Education and Development, vol. 20, no. 1, 1989.

6. Reva Berman Brown, « Meta-competence: a recipe for reframing the competence debate », Personnel Review, vol. 22, no. 6, 1993.

7. Françoise Delamare Le Deist & Jonathan Winterton, « What is competence? », Human Resource Development International, vol. 8, no. 1, 2005.

8. Donald A. Schön, The Reflective Practitioner: How Professionals Think in Action, Basic Books, 1983.

9. Winfred Arthur, Winston Bennett, Pamela Stanush & Theresa McNelly, « Factors that influence skill decay and retention: A quantitative review and analysis », Human Performance, vol. 11, no. 1, 1998.

10. Zhicheng Lin & Aamir Sohail, « Recalibrating academic expertise in the age of generative AI », Patterns (Cell Press), vol. 7, no. 1, 2026.

11. Erik Brynjolfsson, Danielle Li & Lindsey Raymond, « Generative AI at Work », The Quarterly Journal of Economics, vol. 140, no. 2, 2025.

12. Fabrizio Dell'Acqua, Edward McFowland III, Ethan Mollick et al., « Navigating the Jagged Technological Frontier », Organization Science, 2025.

13. Zheyuan Kevin Cui, Mert Demirer, Sonia Jaffe et al., « The Effects of Generative AI on High-Skilled Work: Evidence from Three Field Experiments with Software Developers », Management Science (in press), 2025.

14. Aaron Chatterji, Thomas Cunningham, David J. Deming et al., « How People Use ChatGPT », NBER Working Paper No. 34255, September 2025.

15. Aurélie Leclercq-Vandelannoitte, « Managing BYOD: How do organizations incorporate user-driven IT innovations? », Information Technology & People, vol. 28, no. 1, 2015.

16. Amy Wrzesniewski & Jane E. Dutton, « Crafting a job: Revisioning employees as active crafters of their work », Academy of Management Review, vol. 26, no. 2, 2001.

17. Luca Vendraminelli, Iavor Bojinov et al., « The GenAI Wall Effect », Harvard Business School Working Paper No. 26-011, 2025.

18. Evan F. Risko & Sam J. Gilbert, « Cognitive Offloading », Trends in Cognitive Sciences, vol. 20, no. 9, 2016.

19. Hao-Ping Lee, Advait Sarkar, Lev Tankelevitch et al., « The Impact of Generative AI on Critical Thinking », Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, ACM, 2025.